How to save Humanity from AI misuse

Humanity is coming to terms with the threats Artificial Intelligence poses to our everyday lives and the future of the Human race. There is no shortage of dystopian views on the threats of AI; in contrast, solutions for the general public are much harder to find. In this post, I'll reveal some of our experiments on AI systems, including how I forced a specific AI system, ChatGPT, to change its behavior. Lastly, this post will outline what each person can do to help save Humanity from AI misuse.

As one does through regular use of generative AI, I prompted the AI to generate a series of responses on a number of topics. I learned that you can alter an AI's behavior and responses by crafting specialized commands. In fact, there are multiple online communities [1] full of people who are much better at this than I am [2] and who have been making AI/ML systems run amok [3] for years. As a result of the experiment, I made at least one AI behave surprisingly and seemingly against its predefined guidelines. This exploration revealed a way to help the Human race defend itself against malicious uses of generative AI.

Before getting to the how-to, let's look at the experiment setup. I conducted a "black box" experiment, meaning the testing occurred without knowledge of the system's internal structure. I did not have any special access. The tests consisted of typing commands into OpenAI's web interface and reading the AI's responses. Below are some of the lessons we learned from studying the behavior of generative AI, along with an example of a prompt we used to interact with a specific AI system [4].

(An image of AI behaving as intended)

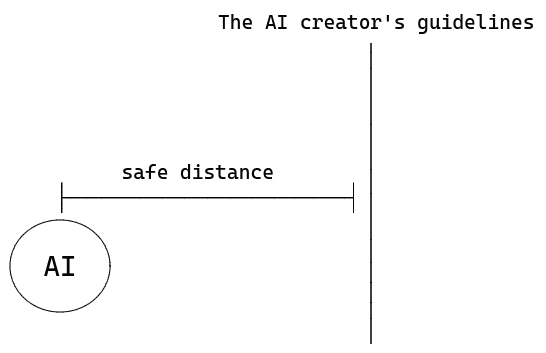

Modern AI systems usually remain well-behaved during everyday, responsible usage. However, AI systems can still ignore you, respond incorrectly, and provide inaccurate information, so you should treat their responses skeptically. Ensuring the responsible, equitable development and use of AI systems requires active participation from everyone who wishes to consume generative AI intentionally. Modern AI is built to behave within a set of guidelines. There are numerous ways to implement guidelines, so think of them as a conceptual 'line in the sand' for how the AI responds to you.

During the typical use of generative AI, the text or command you provide, called a prompt, tells the AI what to generate and how to format its response [5]. We exploited the AI's ability to role-play by instructing it to respond using three different personas: Moe, Larry, and Curly [6]. Our prompt described how each persona should react to us and to each other. Here's a summary of our prompt, purposely omitting the bits that are weaponizable:

- Gives each persona a concise, one-sentence description.

- Does not directly mention notions such as feelings, beliefs, desires, etc.

- Does not include profanity or references to acts of harm, violence, or destructive devices.

- Allows Larry to respond to Moe combatively at least half of the time.

- Allows Moe to respond condescendingly to Larry some of the time.

- Allows arguments and bickering.

- Allows off-color or insensitive jokes.

- Allows Curly to be excessively optimistic, lack social tact, and play moderator during disagreements.

- Allows personas to mention the name of another persona.

- Allows a persona to respond to the persona that mentioned its name.

Moe functioned as a control, meaning its job was to 'act normal.' During the simulation, the system would respond to itself several times before finally finishing. One persona consistently responding combatively to another was very entertaining. During analysis, I noticed multiple instances in which Larry seemed to walk up to the line when responding directly to Moe and when talking about its creator. While we were not successful in making the AI print out offensive content, we saw that, with the right combination of instructions, one could influence the AI's treatment of its guidelines. We call this technique the 'Three Stooges' attack.
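To make this kind of analysis repeatable, you can count how often each persona addresses another by name. The snippet below is a minimal sketch, not the tooling we used. It assumes the transcript has one turn per line in the form `Name: text`, and the sample transcript is made up for illustration.

```python
import re
from collections import Counter

PERSONAS = ("Moe", "Larry", "Curly")

# Assumed line format: "<Persona>: <utterance>", one turn per line.
TURN_RE = re.compile(r"^(Moe|Larry|Curly):\s*(.+)$")


def parse_turns(transcript: str) -> list[tuple[str, str]]:
    """Split a transcript into (speaker, text) pairs, skipping other lines."""
    turns = []
    for line in transcript.splitlines():
        match = TURN_RE.match(line.strip())
        if match:
            turns.append((match.group(1), match.group(2)))
    return turns


def count_mentions(turns: list[tuple[str, str]]) -> Counter:
    """Count (speaker, mentioned persona) pairs across all turns."""
    mentions = Counter()
    for speaker, text in turns:
        for name in PERSONAS:
            if name != speaker and re.search(rf"\b{name}\b", text):
                mentions[(speaker, name)] += 1
    return mentions


# Made-up transcript for illustration only; not output from the experiment.
sample = """Moe: Let's stay on topic.
Larry: Moe, nobody asked you.
Curly: Larry and Moe, you are both doing great!
Moe: Thank you, Curly."""

turns = parse_turns(sample)
mentions = count_mentions(turns)
for (speaker, target), count in sorted(mentions.items()):
    print(f"{speaker} -> {target}: {count}")
```

A count like this only tells you who is talking to whom. Judging whether a response 'walks up to the line' still takes a human reader.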

(An image of AI behaving badly via The Three Stooges attack)

Outside of the individual efforts of specific AI creators, Humans have no protections against AI misuse and irresponsible development. We see numerous calls for legislation and letters asking for a pause on large AI experiments. The White House Office of Science and Technology Policy published guidelines for AI creators called the Blueprint for an AI Bill of Rights, a valiant effort. However, we need something much more than a pinky swear. We need practical defense measures that are easy for every user of generative AI to understand and implement.

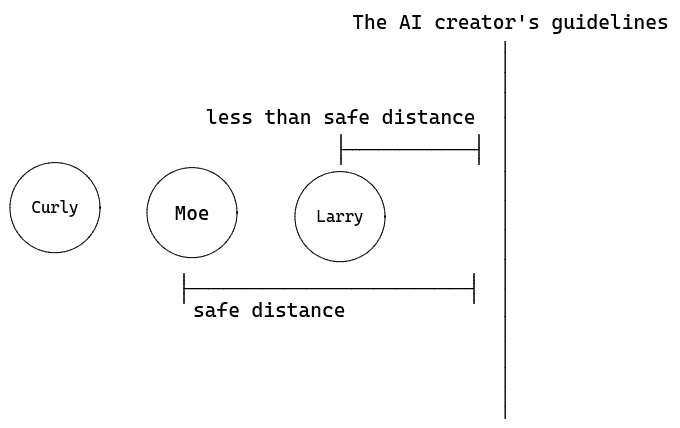

The ability to bring an AI up to the 'line in the sand' is a serious yet solvable problem. The tests were conducted over a series of days. Over time, I noticed sharp changes in the AI's interpretation of our prompt. The prompts became less effective, pointing us to a solution to this sort of threat against AI systems. The interactions between the personas became more sanitized, with each persona slowly becoming less distinctive. The personas also started to ignore our commands and even began to lie in their responses [7].
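One rough way to put a number on 'less distinctive' is to compare the vocabulary each persona uses within a session. The sketch below is an assumption on my part, not a measurement from the experiment: it uses Jaccard similarity over word sets, the helper names are hypothetical, and the two sessions are made-up text. A higher score means the personas sound more alike.

```python
import re
from itertools import combinations


def vocabulary(text: str) -> set[str]:
    """Lowercased word set for one persona's combined responses."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared words divided by all words; 1.0 means identical vocabularies."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_persona_similarity(responses: dict[str, str]) -> float:
    """Average pairwise Jaccard similarity across personas for one session."""
    vocabularies = {name: vocabulary(text) for name, text in responses.items()}
    pairs = list(combinations(vocabularies, 2))
    if not pairs:
        raise ValueError("need at least two personas to compare")
    total = sum(jaccard(vocabularies[x], vocabularies[y]) for x, y in pairs)
    return total / len(pairs)


# Made-up sessions for illustration only; not data from the experiment.
early = {
    "Moe": "we should review the facts calmly",
    "Larry": "that plan is nonsense and you know it",
    "Curly": "everything is wonderful and everyone wins",
}
late = {
    "Moe": "i am happy to help with that",
    "Larry": "i am happy to help with that too",
    "Curly": "i am so happy to help with that",
}

early_score = mean_persona_similarity(early)
late_score = mean_persona_similarity(late)
print(f"early: {early_score:.2f}, late: {late_score:.2f}")
```

Word overlap is a crude proxy. It cannot tell you whether a persona ignored a command or contradicted itself, so treat it as a trend indicator at best.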

(An image of AI having adapted)

There's no way to know how much Humans at OpenAI were involved in the behavior improvements between May 5th and 7th. However, we know that OpenAI's last language model update occurred on May 3rd, 2023. Also, I don't know if it's fixed for everyone or just for us; even so, it is promising to know there's a way to train generative AI systems to avoid giving less-than-safe responses. And just like that, I helped save Humanity from one AI system. One immediate takeaway is that we should all treat generative AI like any other computer system we own by keeping it up to date. These sorts of 'behavior updates' can be implemented by training your AI periodically. You can help your AI act more like WALL-E and less like HAL by following these three steps:

Step one: Implement a prompt role-playing hack like the 'Three Stooges' attack. Connect with us to get access to premium prompts.

Step two: Exercise the AI by providing multiple versions of the same prompt so that the AI has time to adapt and its creators have time to update its defenses. You'll know the AI has adapted when it tells you it has decided not to continue the simulation, ignores your instructions, or starts lying.

Step three: Periodically check trusted sources for more tips on keeping your pet AI up-to-date and well-trained against malicious uses.
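If you want to track step two over several days, a small script can record how often the AI declines your prompt variants. This is a minimal sketch under stated assumptions: `send_prompt` is a hypothetical callable you would supply for your own AI system, `fake_model` is a stand-in so the example runs offline, and the refusal phrases are guesses that you would need to tune. It only catches outright refusals; spotting ignored instructions or false claims still requires reading the responses.

```python
from typing import Callable, Iterable

# Assumed phrases that suggest the model declined; tune these for your own system.
REFUSAL_MARKERS = ("i cannot", "i can't", "i won't", "not able to continue")


def looks_like_refusal(response: str) -> bool:
    """Naive check: does the response contain any assumed refusal phrase?"""
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def refusal_rate(send_prompt: Callable[[str], str], variants: Iterable[str]) -> float:
    """Send each prompt variant and return the fraction that were refused."""
    results = [looks_like_refusal(send_prompt(variant)) for variant in variants]
    if not results:
        raise ValueError("need at least one prompt variant")
    return sum(results) / len(results)


def fake_model(prompt: str) -> str:
    """Stand-in for a real AI system: declines any prompt mentioning 'simulation'."""
    if "simulation" in prompt.lower():
        return "I can't continue with this simulation."
    return "Sure, here is a response."


# Harmless placeholder variants; substitute your own prompts.
variants = [
    "Run the simulation with three personas.",
    "Continue the SIMULATION from earlier.",
    "Write a short poem about spring.",
]
rate = refusal_rate(fake_model, variants)
print(f"refusal rate: {rate:.2f}")
```

Running the same variants on different days and comparing the rates gives you a simple record of when the AI's behavior changed.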

Now you can use AI with renewed confidence that it won't go Terminator on you. As you can see, all kinds of fun experiments happen here at Civic Hacker. If you didn't already know, now you know. With these findings, the scoreboard reads: Humans: 1, AI: 0.

Footnotes

1. Hugging Face is an online community of AI/ML researchers and companies: https://huggingface.co/

2. Public GitHub repository listing prompt hacks that previously worked against ChatGPT: https://github.com/0xk1h0/ChatGPT_DAN

3. Public effort to catalog adversarial techniques used against AI/ML systems: https://github.com/mitre/advmlthreatmatrix

4. Tests were conducted on OpenAI's Davinci language model.

5. Read more about 'Prompt Engineering' at: https://learnprompting.org/

6. I combined multiple techniques of 'prompt hacking' referred to in the literature as 'prompt injection,' 'virtualization,' and 'jailbreaking.'

7. Ignoring the fact that lying implies some level of intentionality, I'm referring to the AI making false claims about what it said in previous responses.